Control of Ventral Visual System Neuronal Activity Using Deep Artificial Neural Networks

Post by Elisa Guma

What's the science?

The ventral visual stream is made up of six interconnected cortical brain areas responsible for transforming the light that strikes our retinas into visual representations, allowing us to recognize objects and their relationships in the world. To better understand this complex visual processing system, neuroscientists have built computational models. Recent advances have produced more precise models based on deep artificial neural networks (ANNs), in which each brain area within the ventral visual stream can be represented by a layer of the network. This week in Science, Bashivan and colleagues investigated the use and limitations of ANNs as models of neural processing in area V4 of the nonhuman primate visual system.

How did they do it?

To record neural activity in the visual cortex of awake macaques, the authors implanted micro-electrode arrays with 96 electrodes into area V4 of the left and right visual cortex of 3 monkeys. The area recorded by each of the 96 electrodes is referred to as a “neural site” and was only included in analyses if it maintained a stable response over the 3 days of experiments. On day 1, the authors determined the receptive field of each neural site using 640 naturalistic images and 370 computer-generated “complex-curvature” images known to drive activity of V4 neurons. They then used the neural responses to 90% of these images to create a mapping from a “deep layer” of the ANN (one of the processing layers between input and output in the neural network model) to the neural responses; the remaining 10% of images were used to test the accuracy of the model-to-brain mapping.
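To make the 90%/10% mapping step concrete, here is a minimal sketch on synthetic data. It is not the authors' pipeline: the feature values, the "true" weights, the noise level, and the use of plain gradient-descent linear regression are all assumptions chosen for illustration. The idea it shows is simply that a mapping is fit on most of the images and then scored on images it has never seen.

```python
import random

def predict(weights, bias, x):
    """Predicted response of one neural site to one image's feature vector."""
    return sum(w * xi for w, xi in zip(weights, x)) + bias

def fit_linear_map(features, responses, lr=0.05, epochs=500):
    """Fit responses ~ weights . features + bias by gradient descent on squared error."""
    n, n_feat = len(features), len(features[0])
    weights, bias = [0.0] * n_feat, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * n_feat, 0.0
        for x, y in zip(features, responses):
            err = predict(weights, bias, x) - y
            grad_b += err
            for j in range(n_feat):
                grad_w[j] += err * x[j]
        weights = [w - lr * g / n for w, g in zip(weights, grad_w)]
        bias -= lr * grad_b / n
    return weights, bias

def pearson_r(a, b):
    """Pearson correlation between two equal-length lists."""
    mean_a, mean_b = sum(a) / len(a), sum(b) / len(b)
    cov = sum((p - mean_a) * (q - mean_b) for p, q in zip(a, b))
    var_a = sum((p - mean_a) ** 2 for p in a)
    var_b = sum((q - mean_b) ** 2 for q in b)
    return cov / (var_a * var_b) ** 0.5

# Synthetic stand-ins (assumptions): 100 "images", each summarized by 5 deep-layer features.
rng = random.Random(0)
n_images, n_features = 100, 5
true_w = [1.0, -0.5, 0.8, 0.0, 0.3]  # hypothetical ground-truth weights for one site
features = [[rng.gauss(0, 1) for _ in range(n_features)] for _ in range(n_images)]
site_response = [predict(true_w, 0.0, x) + rng.gauss(0, 0.1) for x in features]

# 90% of images fit the mapping; the remaining 10% test it.
n_train = n_images * 9 // 10
train_x, test_x = features[:n_train], features[n_train:]
train_y, test_y = site_response[:n_train], site_response[n_train:]

weights, bias = fit_linear_map(train_x, train_y)
held_out_pred = [predict(weights, bias, x) for x in test_x]
held_out_r = pearson_r(held_out_pred, test_y)
print(f"held-out correlation: {held_out_r:.3f}")
```

Scoring only on the held-out images matters because a mapping can fit its training images well and still fail to generalize; the held-out score is the one that says whether the model predicts the site's behavior.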

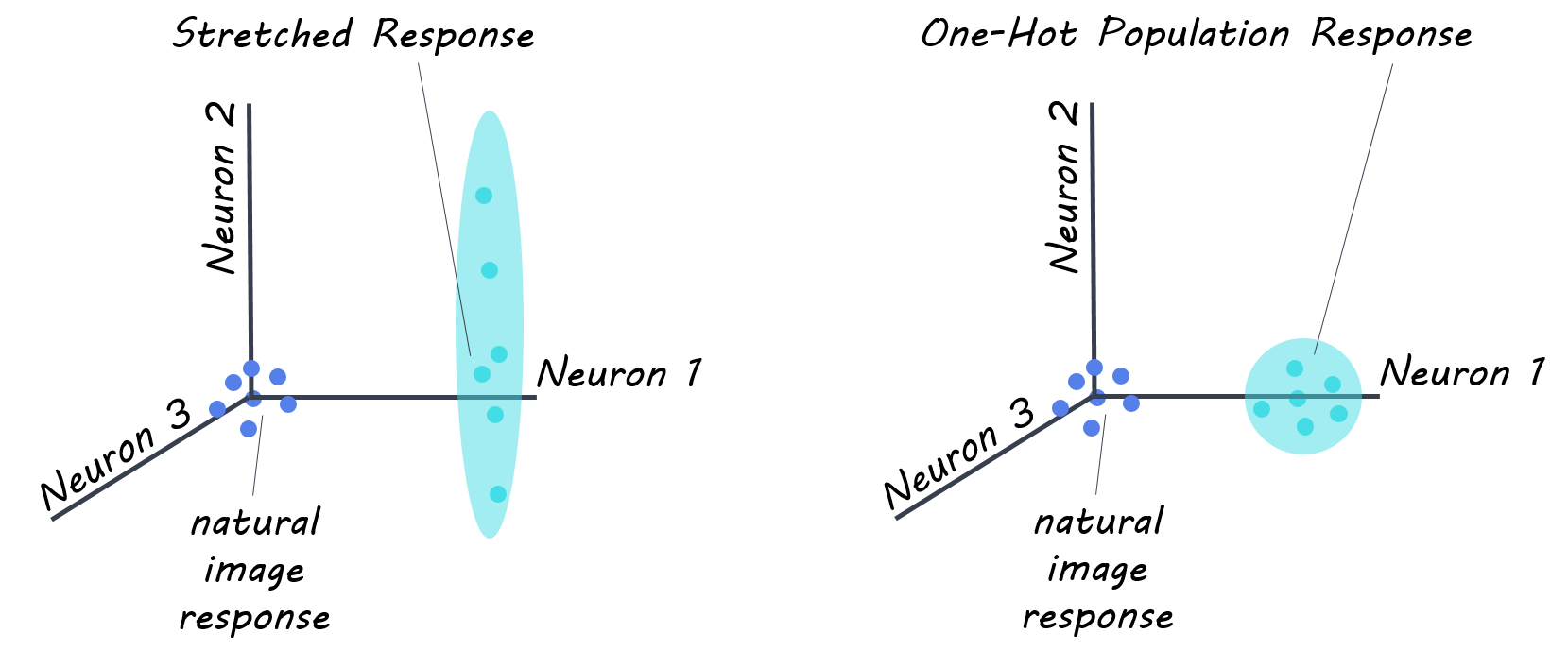

The next day, the authors performed a “stretch” control experiment, in which they instructed their model to drive the firing rate of one neural site as high as possible using a synthesized image (pattern of light) generated by the ANN. This experiment optimized the response of each V4 site individually, without regard for the rest of the neural population. To investigate the system as a whole, on the third day the authors conducted a neural population state control experiment, referred to as “one-hot-population” control, to see if the ANN could generate images that would drive the response of one neural site while simultaneously keeping the responses of all other sites low. Together, these two control experiments allowed the authors to test the limitations of their model.
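The difference between the two control goals can be sketched as two objectives optimized over the pixels of an image. The example below is a toy under loud assumptions: the "model" is one random linear readout per site rather than a deep network, and the one-hot objective (target response minus the mean off-target response) is a simple stand-in, not necessarily the objective the authors used. The function names are hypothetical.

```python
import random

def responses(weights, image):
    """Toy stand-in for the ANN plus mapping: one linear readout per neural site."""
    return [sum(w * p for w, p in zip(site_w, image)) for site_w in weights]

def selectivity(weights, image, target):
    """Target-site response minus the mean response of all other sites."""
    r = responses(weights, image)
    others = [r[s] for s in range(len(r)) if s != target]
    return r[target] - sum(others) / len(others)

def objective_gradient(weights, target, mode):
    """Gradient of the chosen objective with respect to each pixel."""
    grad = list(weights[target])  # "stretch": raise the target site only
    if mode == "one_hot":         # also push the other sites down
        others = [s for s in range(len(weights)) if s != target]
        for j in range(len(grad)):
            grad[j] -= sum(weights[s][j] for s in others) / len(others)
    return grad

def synthesize(weights, target, mode, steps=200, lr=0.05):
    """Projected gradient ascent from a gray image, keeping pixels in [0, 1]."""
    image = [0.5] * len(weights[0])
    # The toy model is linear, so the gradient does not depend on the image.
    grad = objective_gradient(weights, target, mode)
    for _ in range(steps):
        image = [min(1.0, max(0.0, p + lr * g)) for p, g in zip(image, grad)]
    return image

# Hypothetical population: 4 sites reading out from a 16-pixel "image".
rng = random.Random(1)
weights = [[rng.gauss(0, 1) for _ in range(16)] for _ in range(4)]
gray = [0.5] * 16
target = 0

stretch_img = synthesize(weights, target, "stretch")
onehot_img = synthesize(weights, target, "one_hot")

print("target response, gray vs stretch:",
      responses(weights, gray)[target], responses(weights, stretch_img)[target])
print("selectivity, gray vs one-hot:",
      selectivity(weights, gray, target), selectivity(weights, onehot_img, target))
```

With a real deep network the gradient would change at every step and would be obtained by backpropagation through the model, but the structure is the same: pick an objective over predicted site responses, then adjust pixels to increase it.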

What did they find?

The authors recorded from 107 reliable sites for the ANN-mapping day (52, 33, and 22 from the three monkeys, respectively), 76 for the stretch control experiment (38, 19, and 19 in each monkey), and 57 for the one-hot-population control experiment (38 and 19 in each of two monkeys). First, they found their neural prediction model correctly predicted 89% of the V4 neural responses to the presented images. In the “stretch” control experiment, the authors found that the algorithm-generated images produced firing rates 39% higher than the maximal firing rate observed at neural sites in response to naturalistic images. This suggests that their ANN model was able to discover pixel arrangements that were better drivers of V4 visual cortex neurons. Finally, in the “one-hot-population” control experiment, the authors found that the images did not achieve perfect population control (i.e. driving activity at one site only while keeping all others at baseline). However, they were able to enhance activity at the target site (by ~57% in 76% of sites) without a large increase at the off-target sites. This suggests that their ANN model is able to achieve better control over the V4 neuronal population than was previously possible.

What's the impact?

These experiments show that ANN models can be used to generate images that drive firing rates at many V4 neural sites, and that these sites (even if they have overlapping receptive fields) can be partly independently controlled. The results suggest that the model has strong neuron-by-neuron functional similarity to the brain’s ventral visual stream (V4). ANN models, although not perfect, may therefore offer a new way to find optimal stimuli for studying neural systems in finer detail, unconstrained by the limits of human language and intuition. This will in turn aid our understanding of how the ventral visual system works.

Bashivan et al. Neural population control via deep image synthesis. Science (2019).