The Effects of Social Distancing on Body and Brain

Post by Anastasia Sares

What’s the science?

Humans evolved to be social with one another, and we function best when we have strong relationships and regular social contact. However, in many cities, half or more of the inhabitants live alone, and in the current COVID-19 pandemic, people are additionally deprived of in-person interactions at work and social gatherings. It is a good time to remind ourselves of the far-reaching impacts of loneliness and find ways to mitigate it. This week in Trends in Cognitive Sciences, Bzdok and Dunbar reviewed the consequences of social isolation and what we know about its neurobiology.

What do we already know?

Social connectivity is a huge factor in life expectancy. Social isolation increases the risk of dying within the next decade by 25%. The death of someone close, like a spouse, increases the likelihood of death in the immediate future by more than 15%. Severe social deprivation also shortens our telomeres, the protective caps at the ends of the DNA in every cell. Shortened telomeres are linked to aging.

Social connectivity is also related to immune function and physical health. In both humans and other primates, social belonging is related to stronger immune responses, faster wound healing, better regulation of stress hormones, lower systolic blood pressure, lower body mass index, and less inflammation. Finally, social connectivity protects against depression. People with a history of depression are 25% less likely to become depressed again if they belong to one social group (like a sports club, church, hobby group, or charity). If they belong to three social groups, their risk is decreased by around 67%.

One caveat for many of these large-scale human studies is that they show correlation rather than causation. For example, if social isolation and body-mass index (a measure of weight relative to height) are correlated, does it mean that social isolation leads to a higher body-mass index, or that having a higher body-mass index leads to social isolation? Even so, accumulating evidence from many different fields seems to indicate that loneliness is detrimental to our well-being.

What’s new?

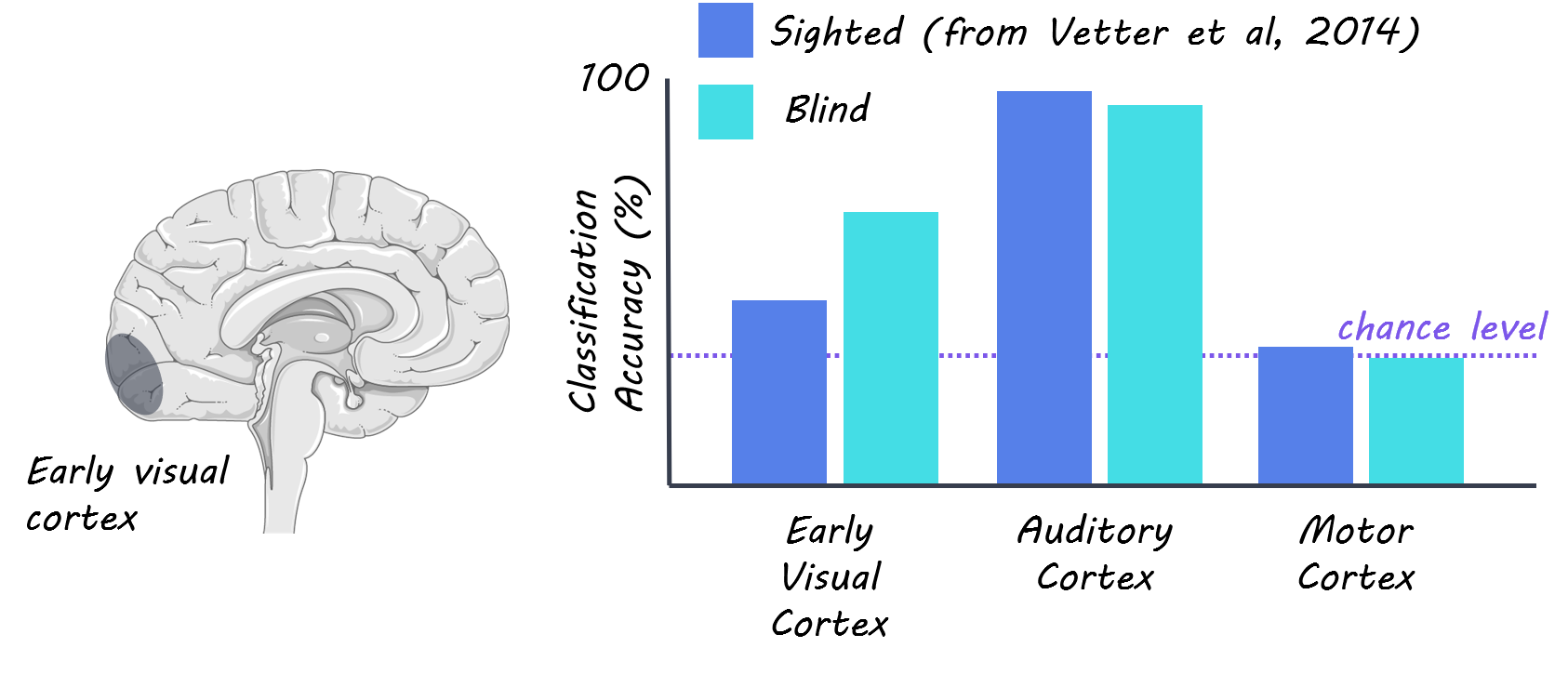

We now understand a little better what’s going on in the brain. Advances in neuroscience have shown that social cognition recruits areas such as the default mode network (related to identity, reflection, etc.) and the limbic system (involved in emotion, motivation, and threat processing). Social isolation affects the brain just as much as the body: the shape and size of the limbic system change with our level of social isolation, and isolation also affects communication within the default mode network and between the default mode network and the limbic system.

Meanwhile, our social lives have gone digital. Research shows that people’s social tendencies are similar online: we seek out social interaction with the same frequency and maintain social networks similar to those we have in real life. The problem with online interaction is that it is of lower quality. Until the rise of video chats, we couldn’t even pick up on facial expressions and body language, which are important nonverbal cues. Synchronous activities like team rowing or singing in a choir promote bonding in ways similar to physical touch and grooming and can help to prevent or reduce feelings of isolation, but synchronous behavior is still a challenge online because of short delays in communication, as anyone who has tried to sing “Happy Birthday” in a conference call will know. In short, nothing can fully replace face-to-face interaction, but digital communication does help to alleviate loneliness to some degree.

What’s the bottom line?

We must take our social connections seriously, both individually and as a society. This is especially important during times of social isolation like the current pandemic, but trends toward urban living and aging populations mean that it is an issue we will be dealing with for years to come. Community organizations and hobby groups are crucial in this regard, as they preserve social interaction and help to protect against social isolation.

Bzdok and Dunbar. The Neurobiology of Social Distance. Trends in Cognitive Sciences (2020).